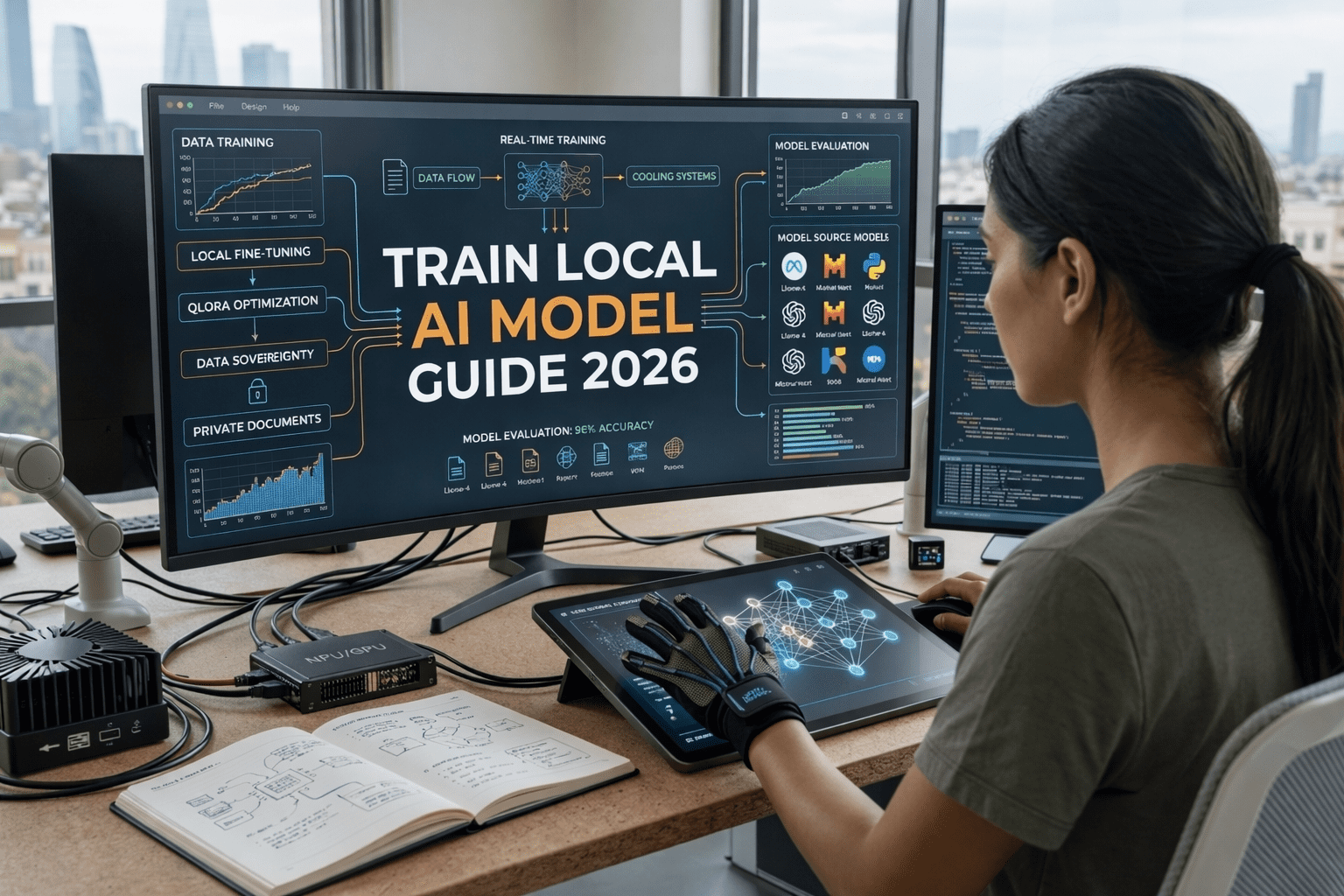

The landscape of artificial intelligence has shifted dramatically over the last few years. If 2024 was the year of the cloud chatbot and 2025 was the year of the agent, then 2026 is the year of local execution. Relying solely on third-party servers is no longer just a privacy concern; it is also a bottleneck for performance and customizability. This guide to training a local AI model in 2026 is for those who want to take the keys back from big tech and build something truly their own.

Why go local now? Because the hardware has finally caught up with the math. With the release of specialized NPU (Neural Processing Unit) architectures in consumer laptops and massive VRAM jumps in desktop GPUs, the barriers to entry have crumbled. If you’ve followed our best AI tools for productivity 2026 ultimate guide, you already know how much time these tools save. But to reach the next level, you need a model that knows your specific data without ever leaking it to the internet.

The Evolution of Local Intelligence

The push for local models isn’t just about being a “prepper” in the digital age. It’s about latency and precision. When you train a model locally, you are essentially creating a digital twin of your own knowledge base. Whether you are a developer, a researcher, or a business owner, the ability to fine-tune a Llama-4 or a Mistral-3 model on your private documents is a superpower.

In the past, training was a task reserved for massive server farms. Today, techniques like QLoRA (Quantized Low-Rank Adaptation) allow us to squeeze incredible performance out of modest hardware. This guide focuses on these efficient methods, so you don’t need a $10,000 rig to get professional results. We are moving away from general-purpose AI and toward specialized, local “experts.”

Hardware Requirements: The 2026 Standard

Before we dive into the code, we need to talk about silicon. You cannot expect to run a high-end training pipeline on a machine from 2022. For local fine-tuning, VRAM (video RAM) is your most important metric.

- Minimum: 16GB VRAM (RTX 5070 Ti equivalent or Mac M4 with 32GB Unified Memory).

- Recommended: 24GB+ VRAM (RTX 5090 or Mac M4 Max).

- Storage: At least 1TB of NVMe Gen5 SSD space for rapid data shuffling.

If you are setting up your environment for the first time, I highly recommend checking out our tutorial on how to setup cursor for large-scale repositories to manage your training scripts effectively. High-quality hardware is useless if your environment is cluttered and unoptimized.

Software Stack for 2026

The software side of local training has become much more user-friendly. We no longer have to fight with broken dependency chains for days. The modern stack relies on containerized environments and high-level libraries that talk directly to the hardware.

- Ollama & LM Studio: For initial model testing and inference.

- PyTorch 3.0: The backbone of modern machine learning.

- Axolotl: A powerhouse library that simplifies the fine-tuning process.

- Hugging Face Transformers: For accessing the latest weights.

You can find the latest open-source weights on Hugging Face, which has become the de facto repository for the global AI community. When you start training locally, your first stop will almost always be their model hub.

Comparative Analysis: Local vs. Cloud Training

To help you decide if local training is right for your project, I’ve outlined the key differences in 2026.

| Feature | Local (2026) | Cloud (API) |
|---|---|---|
| Data Privacy | Absolute (Offline) | Subject to TOS |
| Initial Cost | High (Hardware) | Low (Pay-per-use) |
| Latency | Ultra-Low | Variable (Network) |
| Customization | Full Control | Limited by Provider |

Preparing Your Dataset: The Golden Rule

Any fine-tuning guide worth its salt will tell you the same thing: garbage in, garbage out. The reason most people fail at fine-tuning isn’t the code; it’s the data. You need structured, clean, and relevant examples.

If you are training a model to help with your personal health or research, you might find inspiration in our AI biohacking trends 2026 ultimate incredible guide. The way you organize your personal biometric data for an AI to analyze requires a very specific format (usually JSONL).

- Cleaning: Remove duplicates and irrelevant fluff.

- Formatting: Use the Alpaca or ShareGPT format for best compatibility with 2026 models.

- Diversity: Ensure your model sees different ways of asking the same question.
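To make the cleaning and formatting steps concrete, here is a minimal sketch using only the Python standard library. It assumes your raw examples are already dictionaries with `instruction` and `output` keys (the Alpaca field names, plus an optional `input`); the helper names and the sample refund-policy rows are made up for illustration, and the duplicate check is a simple normalized-text match rather than anything smarter.

```python
import json

def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so near-identical rows compare equal."""
    return " ".join(text.split()).lower()

def clean_records(records):
    """Drop rows with empty fields and duplicates (after normalization)."""
    seen = set()
    cleaned = []
    for rec in records:
        instruction = rec.get("instruction", "").strip()
        output = rec.get("output", "").strip()
        if not instruction or not output:
            continue  # incomplete examples are skipped
        key = (normalize(instruction), normalize(output))
        if key in seen:
            continue  # same question and answer already kept
        seen.add(key)
        cleaned.append({
            "instruction": instruction,
            "input": rec.get("input", "").strip(),
            "output": output,
        })
    return cleaned

def to_jsonl(records) -> str:
    """Serialize one JSON object per line (JSONL)."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)

# Hypothetical sample data: two phrasings of one question, a duplicate, and a broken row.
raw = [
    {"instruction": "What is our refund window?", "output": "30 days from delivery."},
    {"instruction": "what is our  refund window?", "output": "30 days from delivery."},
    {"instruction": "How long do customers have to return an item?", "output": "30 days from delivery."},
    {"instruction": "", "output": "orphaned answer"},
]
dataset = clean_records(raw)
jsonl_text = to_jsonl(dataset)
print(jsonl_text)
```

Note that the two surviving rows ask the same question in different words. That is the kind of diversity you want to keep, so the duplicate check deliberately compares the full instruction and output pair rather than the answer alone.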

Step-by-Step Execution

Once your hardware is humming and your data is clean, it’s time to actually pull the trigger on the training process. This guide uses the LoRA method (in its 4-bit quantized form, QLoRA) because it is the most accessible for individual users.

Step 1: Environment Setup

Start by creating a clean virtual environment. I prefer using Conda or Docker to keep things isolated. You’ll also need a recent NVIDIA driver and a CUDA Toolkit version that your PyTorch build supports, so that your GPU is talking to your software at maximum speed. Everything else in the pipeline depends on this step.
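Before moving on, it can help to confirm that the environment actually sees your libraries and your GPU. The sketch below is one way to do that. The function names are my own, and the package list (`torch`, `transformers`) is an assumption based on the stack described earlier. The script only imports PyTorch if it is installed, so it is safe to run in a fresh environment.

```python
import importlib.util

def find_missing(packages):
    """Return the top-level package names that cannot be imported here."""
    return [name for name in packages if importlib.util.find_spec(name) is None]

def describe_gpu():
    """Report CUDA visibility via PyTorch, or say why it cannot be checked."""
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed"
    import torch  # imported lazily so the script runs without it
    if not torch.cuda.is_available():
        return "torch is installed but no CUDA device is visible"
    props = torch.cuda.get_device_properties(0)
    return f"{props.name}: {props.total_memory / 1024**3:.1f} GiB VRAM"

if __name__ == "__main__":
    # The package list is an assumption; adjust it to your own stack.
    print("missing packages:", find_missing(["torch", "transformers"]))
    print(describe_gpu())
```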

Step 2: Selecting the Base Model

For most users in 2026, the Llama-4 8B or Mistral-Next models are the perfect balance of size and intelligence. They fit comfortably in 16GB-24GB of VRAM during the fine-tuning process when using 4-bit quantization.
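A quick back-of-the-envelope calculation shows why 4-bit quantization matters here. The sketch below estimates the memory taken by the weights alone for an 8-billion-parameter model. It is a deliberate simplification: it ignores activations, optimizer state, the adapter itself, and quantization overhead, all of which add to the real footprint during fine-tuning.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

params_8b = 8e9  # an 8B-parameter base model

fp16_gb = weight_memory_gb(params_8b, 16)
int4_gb = weight_memory_gb(params_8b, 4)

print(f"16-bit weights: {fp16_gb:.1f} GB")
print(f" 4-bit weights: {int4_gb:.1f} GB")
```

At 16 bits the weights alone would fill a 16GB card before training even starts, while at 4 bits they leave most of the VRAM free for everything else.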

Step 3: Configuring the Training Hyperparameters

This is where the magic (and the frustration) happens. You’ll need to set your learning rate, batch size, and “rank.” A higher rank allows the model to learn more complex patterns but requires more VRAM. A reasonable starting point is a rank of 16 and an alpha of 32 for general knowledge tasks.
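To get a feel for what rank and alpha actually control, here is a small arithmetic sketch. It assumes the standard LoRA formulation, in which each adapted weight matrix of shape `d_out x d_in` gets two small trainable matrices of shapes `d_out x r` and `r x d_in`, and the update is scaled by `alpha / r`. The class name and the hidden size of 4096 are illustrative assumptions, not values taken from any particular model.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LoraSettings:
    rank: int = 16
    alpha: int = 32

    @property
    def scaling(self) -> float:
        """Factor applied to the low-rank update (alpha / rank)."""
        return self.alpha / self.rank

    def adapter_params(self, d_in: int, d_out: int) -> int:
        """Trainable parameters added to one d_out x d_in weight matrix."""
        return self.rank * (d_in + d_out)

settings = LoraSettings()
d = 4096  # hypothetical hidden size, for illustration only

full = d * d                            # parameters in the frozen matrix
added = settings.adapter_params(d, d)   # parameters LoRA actually trains

print("scaling factor:", settings.scaling)
print(f"trainable: {added:,} of {full:,} ({added / full:.2%})")
```

Doubling the rank doubles the trainable parameters per matrix, which is where the extra VRAM goes. Keeping alpha at twice the rank keeps the scaling factor at 2 as you experiment.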

If you are planning to deploy this as part of a larger system, make sure to read our how to build automated AI workflow guide 2026 to see how your new local model can trigger other actions within your network.

Step 4: Monitoring the Loss Curve

While the model trains, you’ll see a “loss” value. You want this to go down over time, but not too fast. If it drops to near zero almost immediately, the model has probably overfitted: it has memorized the data rather than learned from it. A balanced approach is to check the model’s output every 100 steps to make sure it still sounds “human” and hasn’t become a parrot.
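If you log the loss at each check, a few lines of code can flag suspicious runs for you. The sketch below is one possible heuristic, not a standard tool. It assumes you also record a loss on held-out examples (an evaluation loss, which is my addition here), and the `floor` and `patience` thresholds are arbitrary values you would tune for your own data.

```python
def overfit_warnings(train_losses, eval_losses, floor=0.05, patience=2):
    """Return (check_index, reason) pairs for checks that look like overfitting.

    Both lists hold one loss value per check (e.g. every 100 steps)
    and are assumed to have the same length.
    """
    warnings = []
    rising = 0  # consecutive checks where eval loss rose while train loss fell
    for i in range(len(train_losses)):
        if train_losses[i] < floor:
            warnings.append((i, "train loss near zero"))
        if i > 0:
            eval_up = eval_losses[i] > eval_losses[i - 1]
            train_down = train_losses[i] < train_losses[i - 1]
            rising = rising + 1 if (eval_up and train_down) else 0
            if rising >= patience:
                warnings.append((i, "eval loss rising while train loss falls"))
    return warnings

# Made-up numbers: training loss keeps falling while held-out loss turns upward.
train = [2.10, 1.40, 0.90, 0.50, 0.20, 0.03]
evals = [2.15, 1.55, 1.20, 1.25, 1.40, 1.70]

for index, reason in overfit_warnings(train, evals):
    print(f"check {index}: {reason}")
```

A warning is a prompt to look at real outputs, not a verdict. Reading a few sample generations at the flagged checkpoint is still the best test.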

Connecting to Your Agentic Future

Training the model is only half the battle. To make it useful, you need to connect it to your existing tools. Fine-tuning is just one piece of the puzzle. Once you have your weights, you can serve them from a private API server to power your own custom agents.

We cover the next steps in our connect AI agents tutorial, which explains how to bridge the gap between a standalone model and a functional assistant that can actually “do” things. Having a local model that can interact with your local files safely is the ultimate goal of local training.
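As a taste of what a private API looks like, here is a minimal client sketch using only the standard library. It assumes you are serving the model with Ollama on its default local port and calling its `/api/generate` endpoint. The function names are my own, and a model name such as `my-finetuned-model` is a placeholder for whatever name you gave your imported weights.

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"

def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a locally served model."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_local_model(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the server running, a call like `ask_local_model("my-finetuned-model", "Summarize my notes on QLoRA.")` returns the reply as a plain string, and nothing leaves your machine because the request goes to `localhost`.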

Troubleshooting Common Issues

Even with a perfect setup, things can go wrong. Here are the most common hurdles:

- OOM (Out of Memory) Errors: Usually caused by a batch size that is too high. Drop your batch size or enable “Gradient Checkpointing.”

- Model Hallucinations: Often a sign that your training data wasn’t diverse enough or your learning rate was too high.

- Driver Mismatch: Ensure your PyTorch build targets a CUDA version that your installed NVIDIA driver supports.
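For the out-of-memory case, dropping the batch size does not have to change how your training behaves. A common companion technique, which is my addition and not something covered above, is gradient accumulation: you process smaller micro-batches and only update the weights after several of them, so the effective batch size stays the same. The sketch below simply lists the fallback combinations to try, with a hypothetical helper name.

```python
def fallback_plans(effective_batch: int, start_micro_batch: int):
    """Return (micro_batch, accumulation_steps) pairs, halving the micro-batch each time.

    Every pair keeps micro_batch * accumulation_steps equal to effective_batch.
    """
    plans = []
    micro = start_micro_batch
    while micro >= 1:
        if effective_batch % micro == 0:
            plans.append((micro, effective_batch // micro))
        micro //= 2
    return plans

# Example: you wanted an effective batch of 32 but a micro-batch of 8 ran out of memory.
for micro, steps in fallback_plans(32, 8):
    print(f"micro-batch {micro} x {steps} accumulation steps")
```

Work down the list until training fits in memory. Smaller micro-batches run slower per update, so stop at the first setting that works.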

If you find that your model is struggling to understand complex logic, it might be worth comparing its performance against closed-source giants. Take a look at our how to use Claude 3.5 Sonnet Artifacts 2026 to see what the “gold standard” looks like and where your local model might need more fine-tuning.

The Ethical Side of Local AI

As we wrap up this guide, we have to discuss the responsibility that comes with it. When you own the model, you own the guardrails. There are no corporate filters to stop the AI from generating biased or incorrect information.

Data sovereignty is a double-edged sword. While it protects your privacy, it also means the quality and safety of the output are 100% on you. This guide encourages a “Human-in-the-Loop” philosophy. Never let a locally trained model make critical decisions without a final human check.

Future Outlook: Beyond 2026

The speed of innovation suggests that by 2027, the methods in this guide will be seen as the “early days” of personal intelligence. We are moving toward a world where your phone might carry a scaled-down version of your custom-trained model, syncing updates whenever you are near your main workstation.

The ultimate aim of this guide is to foster independence. In a world where every click and prompt is monitored and monetized, having a private space for your thoughts and data is the most rebellious and productive thing you can do.

Conclusion: Taking the First Step

Mastering local model training is not an overnight process. It requires patience, a bit of trial and error, and a willingness to break things. But the reward is worth every minute of setup time: a personalized, private, and powerful intelligence that answers only to you.

If you are still on the fence about whether you need a custom model, start small. Use the tools mentioned in our best AI tools for productivity 2026 ultimate guide first. Once you hit the limits of what those tools can do with your specific data, come back to this guide and start building your own.

The era of big tech’s monopoly on intelligence is ending. With the right hardware, a clean dataset, and the steps outlined in this guide, the future of AI is truly in your hands. Happy training!