There is a specific kind of chill that runs down your spine when you realize you just pasted a sensitive client contract or a private business strategy into a cloud-based AI. In 2024, we didn’t think much about it. But in 2026, data sovereignty is no longer a luxury—it’s a requirement. I remember the exact moment I made the switch: I was working on a proprietary algorithm and realized that, by using a web-based chatbot, I was essentially “gifting” my intellectual property to a tech giant.

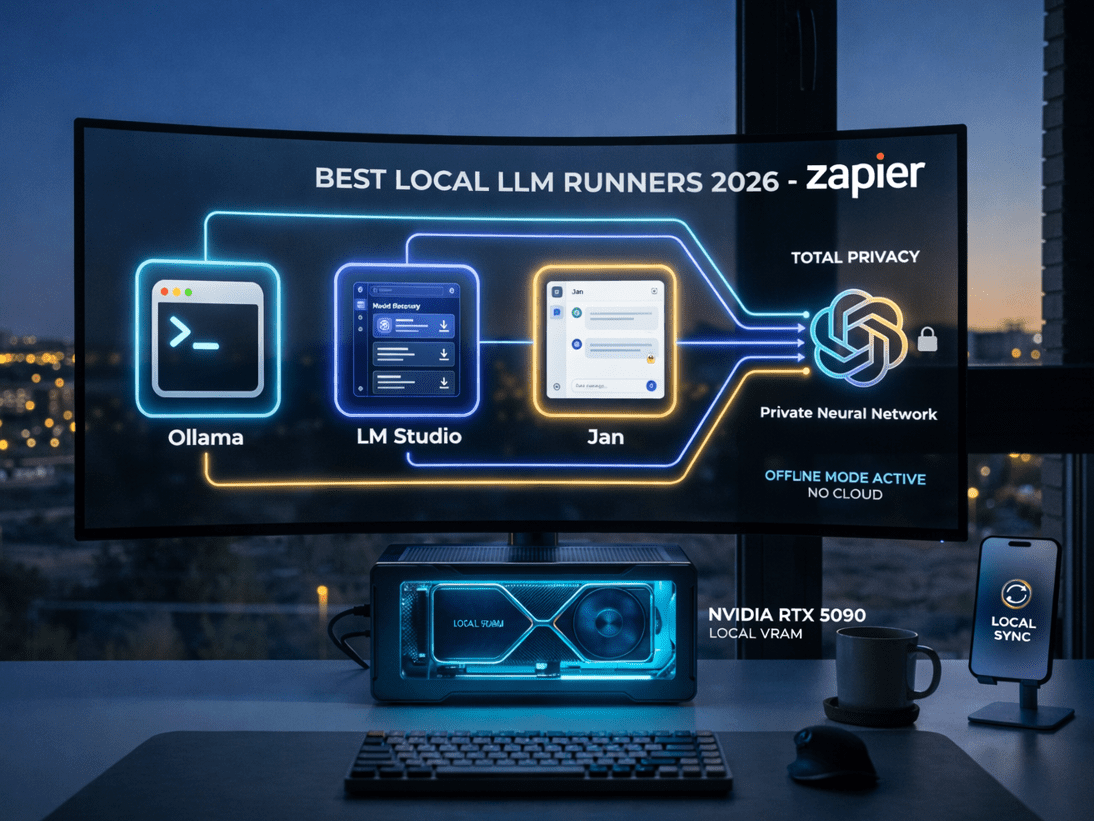

If you are tired of wondering where your data goes, it is time to look at the best local LLM runners of 2026. Running Large Language Models (LLMs) locally on your own hardware used to be for developers only. Today, thanks to tools like Ollama, LM Studio, and Jan, anyone with a decent laptop can have a private, offline AI assistant.

In this comprehensive guide, we are going to compare these three titans to find out which one deserves a spot on your machine.

Why Local AI is Winning in 2026

The trend is clear: the “Cloud-Only” era is fading. Local runners offer three things that ChatGPT or Claude simply cannot: total privacy, no network round-trip, and no subscription fees. Response speed still depends on your hardware, but nothing waits on a remote server.

When you run an AI locally, your prompts and documents never leave your machine. There is no “Terms of Service” that allows a company to train on your thoughts. As we’ve discussed when building a Second Brain with AI, the bridge between having information and keeping it secure is critical. This is why choosing a local runner is the first step for any serious professional.

1. Ollama: The Power of the Command Line (Simplified)

Ollama has become the “Gold Standard” for those who want efficiency. It’s a lightweight, powerful tool that lives in your terminal but offers a very simple way to manage models. What earns it a place on this list is its model library: with one command, you can download Llama 4, Mistral, or Phi-4.

The beauty of Ollama is its Modelfile system, which lets you set a base model, a system prompt, and sampling parameters before you even start talking to the AI. If you have followed our guide on how to Automate Inbox Zero with AI Agents, you’ll find that Ollama is the perfect backend to serve those agents locally, so your emails stay on your own machine. This versatility is why it remains the top pick for power users.
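
As a rough illustration of the Modelfile idea, the sketch below generates a small Modelfile from Python. It uses only the `FROM`, `PARAMETER`, and `SYSTEM` instructions; the base model name, the temperature value, and the system prompt are placeholder assumptions, not recommendations from this guide.

```python
def render_modelfile(base_model: str, system_prompt: str, temperature: float = 0.2) -> str:
    """Return Modelfile text: a base model, one sampling parameter, a system prompt."""
    return (
        f"FROM {base_model}\n"
        f"PARAMETER temperature {temperature}\n"
        f'SYSTEM """{system_prompt}"""\n'
    )


if __name__ == "__main__":
    # "mistral" and the prompt text are illustrative placeholders.
    print(render_modelfile("mistral", "You are a concise assistant for drafting email replies."))
```

Saving the output as a file named `Modelfile` and running `ollama create inbox-helper -f Modelfile` should register the customized model; `inbox-helper` is a hypothetical name chosen for this example.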

2. LM Studio: The Ultimate Discovery Hub

For most users, LM Studio is the entry point. It provides a beautiful, “Apple-like” interface where you can search Hugging Face for compatible models and download them with a single click. It also visualizes your hardware usage, showing you exactly how much GPU memory (VRAM) you are using.

The standout feature of LM Studio in 2026 is its “Local Server” mode. You can run a model in LM Studio and then point other apps to it. It’s like having your own private, OpenAI-compatible API running on your desk. For anyone who values a polished UI and easy model discovery, LM Studio is the most user-friendly of the three.
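
Here is a minimal sketch of what “pointing another app” at that local server can look like, using only the Python standard library. It assumes the server is listening on `http://localhost:1234/v1` (LM Studio's usual default; check the port shown in your own server tab) and that a model is already loaded. The model name `local-model` is a placeholder.

```python
import json
import urllib.request

# Assumption: the local server runs on this address; adjust to your setup.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt: str, model: str = "local-model") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the local server."""
    body = {
        "model": model,  # placeholder name, not a real model identifier
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant's reply text (needs the server running)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]
```

Calling `ask("Summarize this clause in one sentence: ...")` only works while the local server is running. Because the request never targets anything but `localhost`, the prompt stays on the machine.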

3. Jan: The Open-Source “ChatGPT” Clone

Jan is the dark horse that has taken the community by storm. It’s an open-source alternative that looks and feels much like ChatGPT, but it runs entirely on your machine. Why does it make this list? Because of its “Extension” ecosystem.

Jan allows you to plug in different “engines.” If you want to use your local GPU, you can. If you want to connect to a remote server for a massive model, you can do that too. It’s built with a “Local-First” philosophy that matches the security needs of 2026. For those who want the familiar chat interface without the cloud privacy risks, Jan is a top-tier choice.

Comparative Analysis: Local LLM Software

Hardware Requirements: Can Your PC Handle It?

You can’t talk about local LLM runners without talking about hardware. To get a smooth experience (at least 30 tokens per second), you generally need:

- Apple Silicon Mac (M2/M3/M4 Max): The unified memory architecture lets the GPU use most of the system RAM, which makes Macs the kings of local AI.

- NVIDIA GPUs (RTX 4090/5090): For Windows users, VRAM is the most important metric. You need at least 12GB to run a quantized 7B or 14B model comfortably.

- RAM: 32GB is the new “minimum” for a professional setup.
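
To check whether a machine actually reaches the 30 tokens per second mentioned above, you can compute the rate from the timing fields a runner reports. The sketch below assumes Ollama's non-streaming response format, where `eval_count` is the number of generated tokens and `eval_duration` is the generation time in nanoseconds. The sample numbers are invented for illustration, not measurements.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from an Ollama-style response dictionary."""
    eval_count = response["eval_count"]          # generated tokens
    eval_duration_ns = response["eval_duration"]  # generation time in nanoseconds
    if eval_duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return eval_count / (eval_duration_ns / 1e9)


# Invented sample: 300 tokens generated in 10 seconds.
sample = {"eval_count": 300, "eval_duration": 10_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")
```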

The good news is that today's runners make heavy use of “quantization” (storing model weights at lower precision to shrink them), meaning you can now run very smart AIs on mid-range laptops that would have melted two years ago.
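
The arithmetic behind quantization is simple enough to sketch. The function below estimates the memory needed for the weights alone as parameters × bits per weight ÷ 8. It is a back-of-the-envelope figure under stated assumptions: real quantized formats use slightly more than their nominal bit width, and the context cache and runtime add overhead on top.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


for bits in (16, 8, 4):
    # Weights only; leave headroom for context and runtime overhead.
    print(f"7B model at {bits}-bit: ~{estimate_weights_gb(7, bits):.1f} GB")
```

By this estimate a 7B model drops from roughly 14 GB at 16-bit to about 3.5 GB at 4-bit, which is why it can fit on a 12GB GPU with room to spare.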

Step-by-Step: Setting Up Your Private AI

If you’re ready to reclaim your privacy, follow this simple path:

Step 1: Download and Install

I recommend starting with LM Studio if you want a GUI, or Ollama if you want something that runs in the background. Both are free and take less than 5 minutes to install.

Step 2: Choose Your Model

Look for models like Llama-3-8B (for general chat) or Mistral-Nemo (for reasoning). Both are small enough to run well on consumer hardware once quantized.

Step 3: Configure Context

Give your local AI a “System Prompt.” Tell it who you are and what your privacy expectations are. Unlike with cloud AIs, no provider-side instructions or filters are layered on top of yours: the system prompt you write is the one the model sees. That control is the real freedom of running locally.
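
In practice, a system prompt is just the first message in the conversation. The sketch below builds a chat payload in the role-based format used by Ollama's `/api/chat` endpoint and by OpenAI-compatible servers. The model name and the prompt wording are illustrative assumptions, and the function only builds the payload; sending it is left to whichever runner you chose.

```python
import json


def build_chat_payload(model: str, system_prompt: str, user_message: str) -> dict:
    """Return a chat payload whose first message carries the system prompt."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
        "stream": False,  # ask for a single complete reply instead of chunks
    }


payload = build_chat_payload(
    "llama3",  # placeholder: use the name of a model you have downloaded
    "You are my private assistant. Treat every document I share as confidential.",
    "Summarize the attached contract in five bullet points.",
)
print(json.dumps(payload, indent=2))
```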

Privacy Deep Dive: Why “Local-First” Matters

In 2026, we’ve seen the rise of “Shadow AI”: employees using cloud tools against company policy because they need the help, but risking data leaks in the process. By deploying local runners, companies can provide AI power to their teams without sending prompts or documents to a third party.

As we noted in our Apple Intelligence vs Microsoft Copilot comparison, even the giants are moving toward “On-Device” processing. But while they still have a “backdoor” to the cloud, the Best Local LLM Runners 2026 give you the “kill switch.” You can literally unplug your internet and your AI will keep working. That is true digital sovereignty.
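
If you script against a local runner, one small safeguard is to verify that the configured endpoint really points at your own machine before sending anything. The sketch below is one possible check using only the standard library; the function name and the example URLs are assumptions made for illustration.

```python
import ipaddress
from urllib.parse import urlparse


def is_loopback_url(url: str) -> bool:
    """Return True only if the URL's host is localhost or a loopback IP address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other DNS name is treated as non-local.
        return False


for endpoint in ("http://127.0.0.1:11434", "http://localhost:1234/v1", "https://api.example.com/v1"):
    print(endpoint, "->", "local" if is_loopback_url(endpoint) else "NOT local")
```

A check like this will not replace unplugging the cable, but it catches the common mistake of leaving a cloud URL in a config file.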

Frequently Asked Questions (FAQ)

Are local models as smart as GPT-4? In 2026, often yes. The largest open-weight models, such as the 70B-class Llama releases, can match or exceed GPT-4 on many logic and coding tasks, provided your hardware can run them.

Is it totally free to use these local runners? The software is free to download, and the model weights are openly available, though you should check each model's license before commercial use. Your only cost is the electricity and the initial hardware investment. No monthly fees.

Can I use these tools for coding? Absolutely. Ollama and LM Studio both expose a local API that coding extensions in editors like VS Code can point to, providing a private alternative to GitHub Copilot. Support in Cursor depends on how it is configured.

My Honest Take: The Future is Decentralized

We spent a decade moving everything to the cloud, and now we are realizing the cost: our privacy and our control. Local LLM runners aren’t just cool gadgets for nerds; they are the tools of liberation for the modern digital worker.

If you value your ideas, don’t feed them to a cloud that doesn’t care about you. Download one of these local runners today. Start with a small model, feel the speed of an offline response, and enjoy the peace of mind that comes with knowing your “Third Brain” is safely sitting on your desk, and nowhere else.